It's much easier to hold computers accountable than it is to hold humans accountable

Computers live in a totalitarian surveillance state

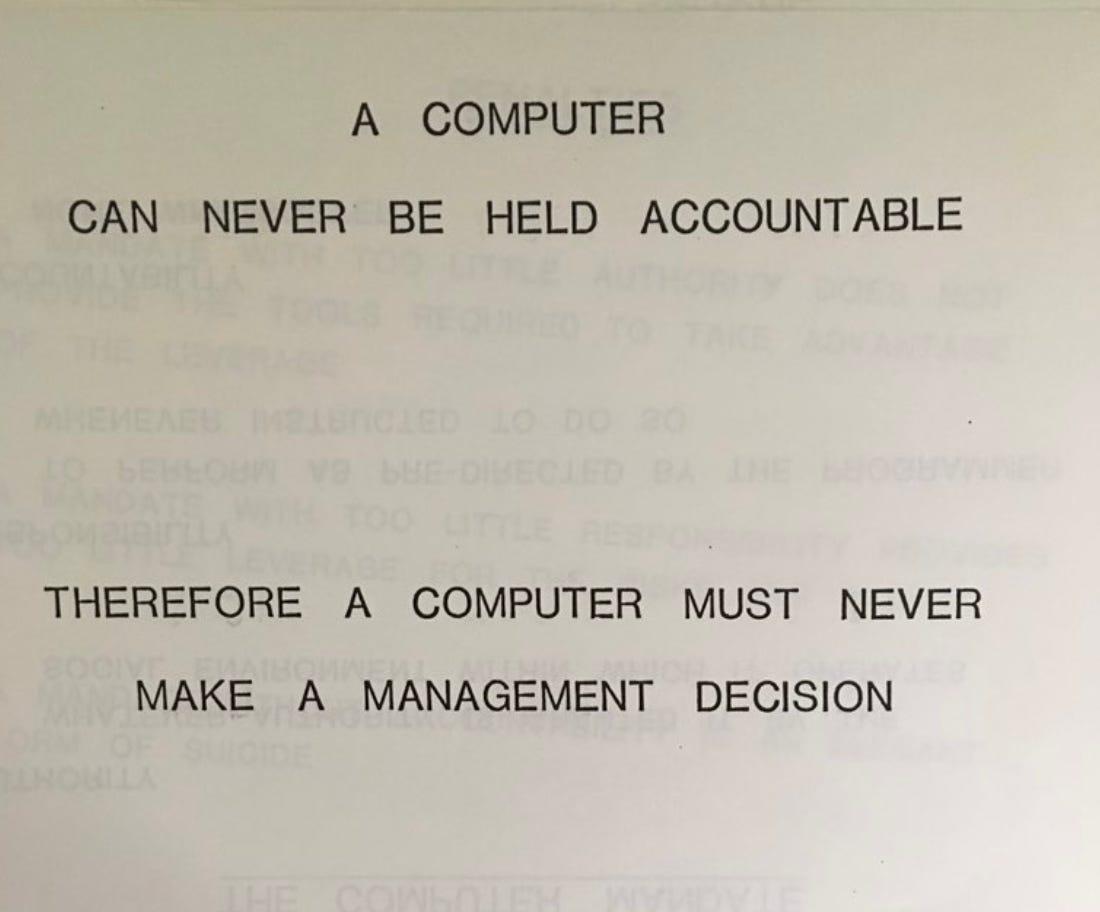

This image has become really popular in response to AI taking off.

It’s been shared in a lot of recent conversations about AI, from AI psychosis to autonomous vehicles to regular bad outputs. Simon Willison dug into the source here; it looks like it’s from an old IBM presentation.

I think this is a pretty silly sentiment and is basically incorrect. Consider the following statements:

“Bill’s been moving a little slow recently compared to slightly younger people. I think the best move is to kill him and throw his body away and replace him with someone who can move about 0.5 seconds faster on each task.”

“Martha seemed to consistently say incorrect things when we ask her about a topic. I performed neurosurgery on her and discovered the problem in her brain, I rewired it and she’s behaving fine now.”

“I just learned that Andy ran over a squirrel. This is really terrible and I’m re-evaluating everything I think about how safe he is as a driver. Luckily, we keep cameras all around his car 24/7 so we can figure out what happened. I’ll perform neurosurgery on him to make sure this never happens again, and will keep him off the road in the meantime.”

“We have this new service where every day 20 million people talk to 20 million representatives of our company. Unfortunately, we recently found out that a few of our employees are crazy and gave the people they were connected to really bad advice, hyping them up when they asked to be hyped up. We’re going to kill all 20 million employees and replace them all with better employees to get that rate down to zero.”

It seems pretty obvious that we actually:

- Hold computers to way, way higher standards of accountability than real people.
- Are usually much more able to monitor and detect their bad behavior.
- Have a much easier time being much more invasive in how we deal with their bad behavior.

I think when people say “A computer can never be held accountable” what they mean is “A computer can never be socially punished for a bad decision.” That’s true, but social punishment is just a means to a goal: better behavior or harm prevention. It’s also a pretty clumsy, often useless tool. Many people are socially punished and still behave terribly. If we had the ability to perform neurosurgery on everyone behaving badly to permanently change their behavior, I think this would be seen as:

- Much more effective than regular social punishment.
- Deeply evil and totalitarian.

And yet we do the same to computers regularly. Why would we want to “hold computers accountable” when that’s way, way less effective than what we can actually do to them?

I’ve recently seen this image shared a lot in the context of Waymo running over a cat in San Francisco. It seems like human drivers probably run over 75-150 times as many animals when they drive the same distance as Waymo has. Many of these are hit-and-runs. The people whose animals get killed often can’t hold the driver accountable, because the driver speeds away before people can see the license plate. In comparison, it is very very visible when a car is an autonomous vehicle, and the brand is painted all over it. It’s much easier to identify and complain to a massive company. It’s likely that even minor negative media attention will cost them a lot of money, so they have a strong incentive to change their behavior. The cars can be programmed to better detect their surroundings before being let on the road. Meanwhile, human drivers who kill people with their cars are often eventually allowed to continue to drive. If you kill someone while driving drunk in Florida, as long as you don’t have a prior DUI offense, you can eventually drive again! We’re already way, way more lenient with human drivers than we are with AI drivers.

Another funny comparison is AI psychosis. Many, many people have gone crazy using the internet. QAnon, antivax, and getting memed into doing apologia for North Korea are some surprisingly common examples. But that doesn’t stick out to us, because the people are going crazy interacting with content created by other crazy people. These other crazy people get to stay anonymous and never get properly socially punished. But OpenAI can receive huge amounts of condemnation whenever any additional person goes crazy using ChatGPT. Problems with computer applications run by big corporations are much easier to identify, the corporation is easier to pressure to change its behavior, and the applications themselves are easier to reprogram. If only it were as easy to catch all the crazy people online and turn them normal.

There are great reasons to worry about computers having more control in society, cybersecurity and AI risk among others. But the idea that they’re somehow less “accountable” than people isn’t one of them. Computers shouldn’t be used to dodge accountability, but it’s very hard to do that in general. Unlike with humans, it’s fine to keep computers in what’s basically a totalitarian surveillance state and completely reprogram them at the slightest sign of misbehavior. Computers are held to much higher standards of accountability than humans. Let’s keep it that way, but also acknowledge that citizens of totalitarian surveillance states can make better drivers.

One thing people might mean when they say "accountable" is "able to explain its reasoning in making the controversial decision", such as a human driver explaining after the fact what led to them running over a cat. The human’s memory might be partially reconstructed but it’s still grounded in cognitive processes that did occur, whereas an AI giving a similar account would generate a plausible-sounding story with no connection to its actual computation.

Compare this to a world where autonomous vehicles were "built, not grown" and an engineer could point at the line in a program to explain the decision. Of course, we don't know how to build such an autonomous vehicle, but in such a world, not only could an engineer or decision maker be blamed, but also we could have a fix whose robustness we could be confident in.

This is not to say autonomous vehicles shouldn't be on the road, or that all current decisions are explainable (try getting a straight answer from a bureaucracy about why your claim was denied). But I can empathize with people being concerned that we are heading towards an AI-mediated world where things increasingly happen for reasons we can’t explain.

I think the only definition of "held accountable" under which it makes sense to claim that AIs can't be held accountable is something like "punished in a way that genuinely hurts the one being punished," which is impossible for current AIs because they aren't conscious. I always thought it was pretty nonsensical to care about this definition of accountability. What matters is that you have some way of changing bad behavior, either by encouraging better behavior in the future or by making the misbehaving agent unable to continue their misbehavior. This is the entire purpose of holding people accountable! But this can clearly be done to AIs - in fact, this is just what AI training is. The only reason you would care about the other definition of accountability is if you cared about punishment for its own sake - i.e., retributivism - and you somehow applied this principle even to non-conscious AIs. But why on Earth would getting revenge on a non-conscious being matter intrinsically? And even worse, why would it matter more than actually saving lives? I care a lot more about reducing car deaths than I do about making beings that did not even consciously do anything wrong suffer.