Let's not compare data center heat exhaust to nuclear bombs

Why dropping hundreds of nuclear bombs on Washington DC every day is pretty normal

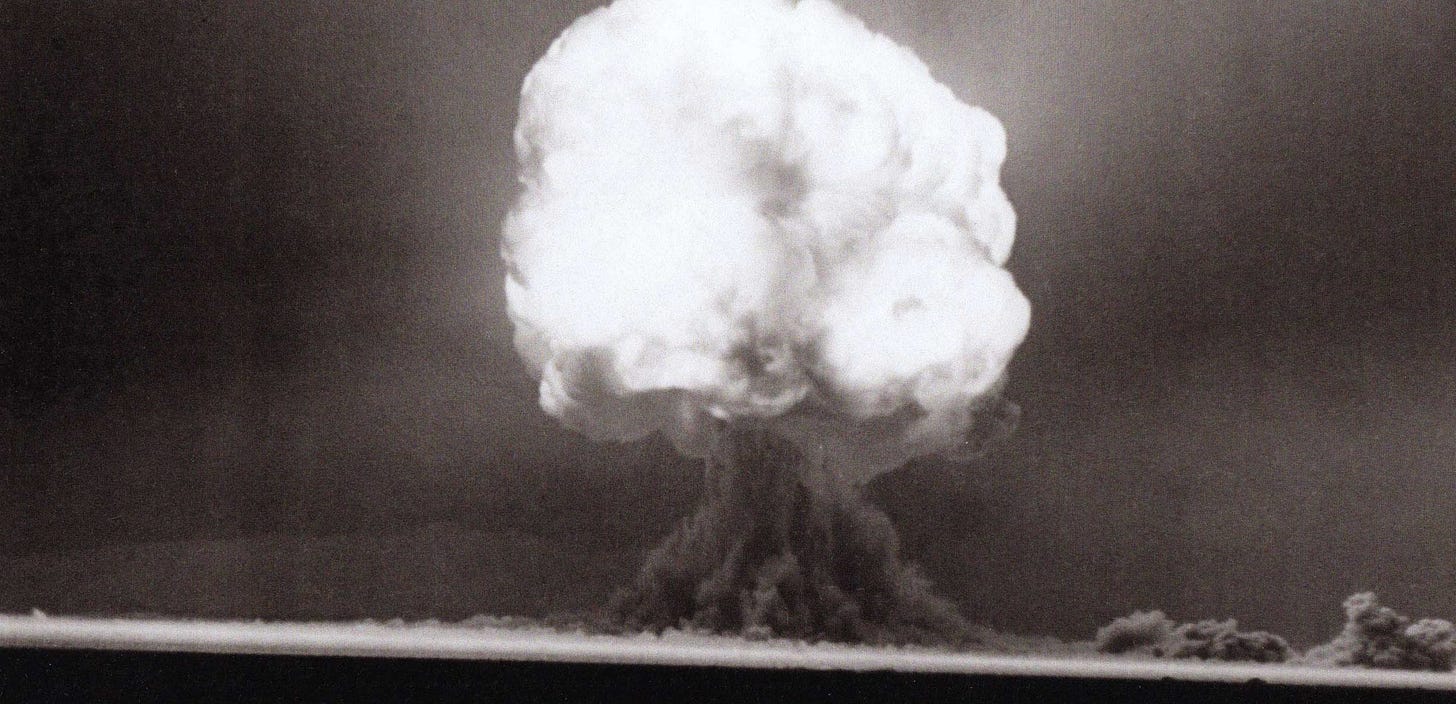

Because data centers pack lots of energy use into extremely dense computers, they need all the excess heat they generate carried out of the building, for the same reason your laptop has a built-in fan to prevent overheating. All the energy data centers use eventually becomes heat, and very large data centers use huge amounts of energy, so they generate huge amounts of heat as well and expel it all into the surrounding air. There are plans for data centers that can draw gigawatts of power. Running at a gigawatt for a full day releases 24 billion watt-hours of heat energy. The first ever nuclear bomb, detonated in the Trinity Test, released about 27 billion watt-hours of heat energy. So a gigawatt data center releases roughly as much heat energy into the surrounding air as a Trinity Test every single day.
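For anyone who wants to check the unit conversions, here is a minimal sketch. It only assumes the standard definitions of a gigawatt-hour and a kiloton of TNT; the function names are made up for illustration.

```python
# Standard unit definitions.
JOULES_PER_GWH = 3.6e12            # 1 GWh = 1e9 W * 3600 s
JOULES_PER_KILOTON_TNT = 4.184e12  # 1 kiloton of TNT is defined as 4.184e12 J


def daily_heat_gwh(power_gw: float) -> float:
    """Heat released in 24 hours by a load running at a constant power_gw."""
    return power_gw * 24.0


def gwh_to_kilotons(energy_gwh: float) -> float:
    """Convert gigawatt-hours to kilotons of TNT equivalent."""
    return energy_gwh * JOULES_PER_GWH / JOULES_PER_KILOTON_TNT


one_gw_day_gwh = daily_heat_gwh(1.0)             # 24 GWh
one_gw_day_kt = gwh_to_kilotons(one_gw_day_gwh)  # a bit over 20 kilotons
trinity_kt = gwh_to_kilotons(27.0)               # the 27 GWh Trinity figure above

print(f"1 GW for a day: {one_gw_day_gwh:.0f} GWh = {one_gw_day_kt:.1f} kt of TNT")
print(f"Trinity at 27 GWh: {trinity_kt:.1f} kt of TNT")
```

Both numbers land in the low 20s of kilotons, which is why the two are fair to call roughly equal.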

Surely this would create some environmental problems?

Surprisingly, data centers expelling even this massive amount of heat will probably not have a meaningful impact on the local environment, and definitely not on global air temperatures. To understand why, it’s helpful to think about how different the effects of heat are when you concentrate it compared to when you spread it out over a day.

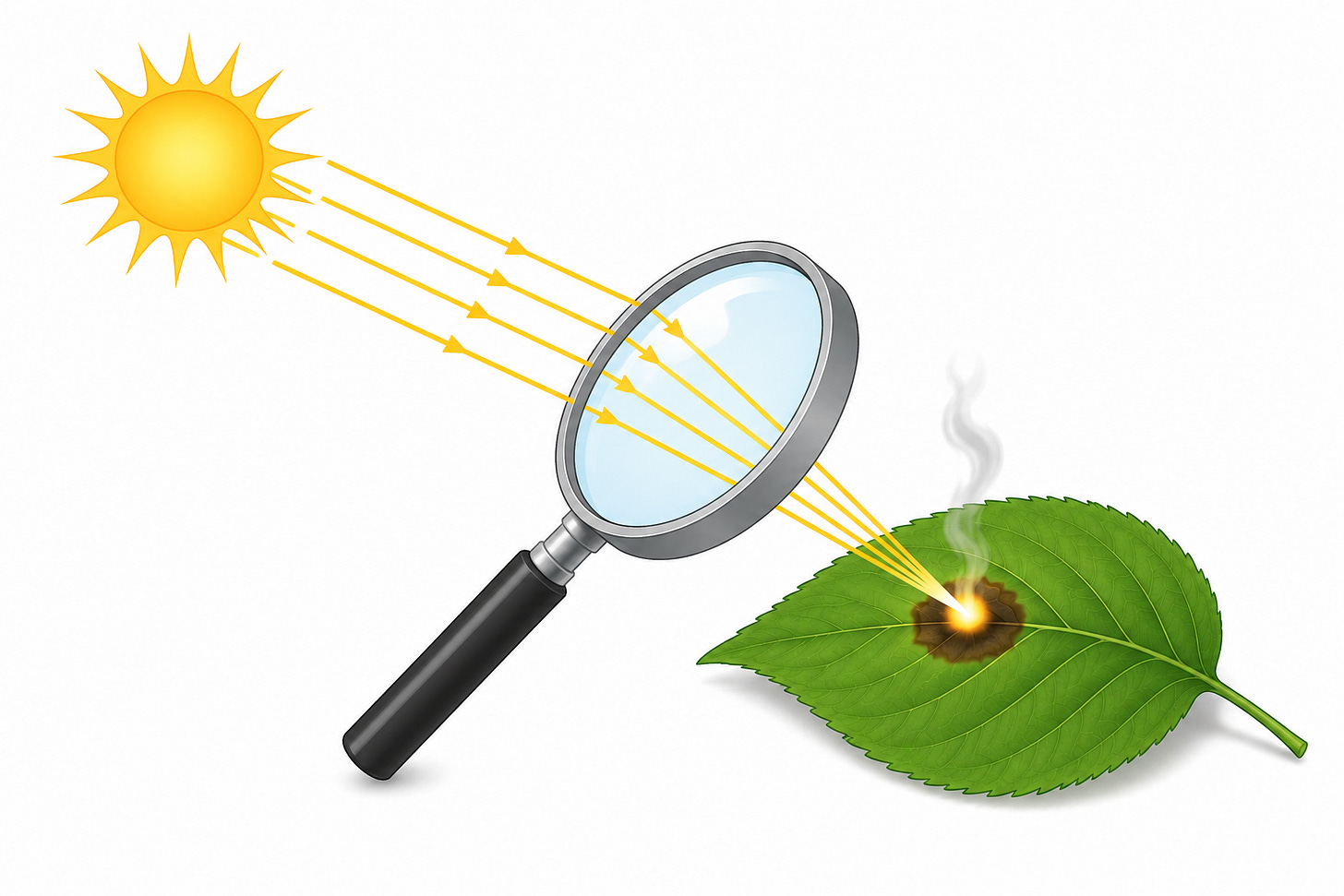

Consider the effects of concentrating sunlight in a single place with a magnifying glass.

This can do a ton of damage or start a fire. But all this heat energy was already there before. It was just hitting a surface area the size of the magnifying glass instead of a tiny point. If you concentrate a normal amount of heat energy in a very tiny place, it becomes dangerous. By the same logic, if you spread a dangerous amount of heat from a hyper-concentrated point over a larger area, it can fade into the background of the normal levels of heat we’re exposed to.

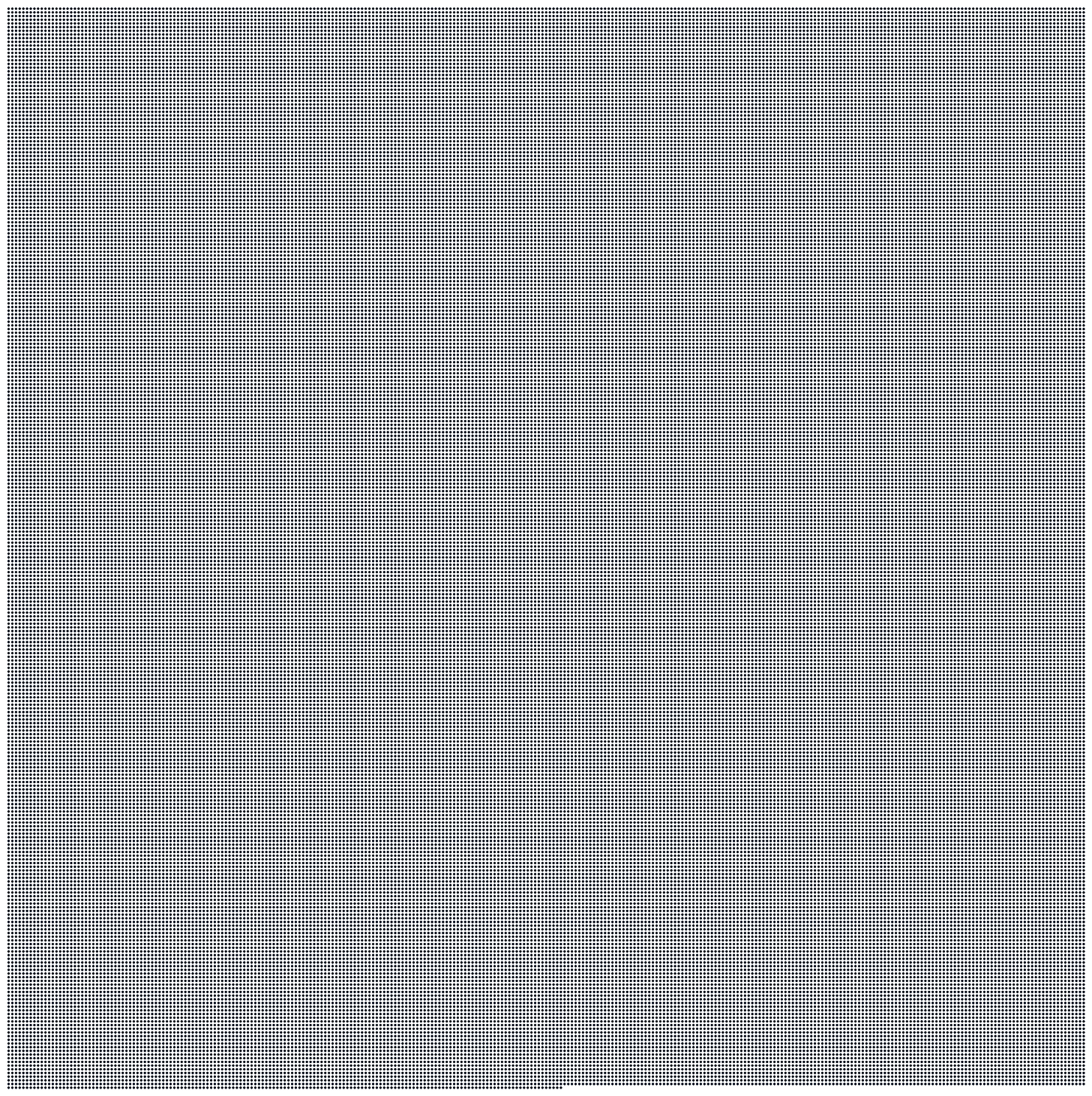

A data center spreads its heat exhaust very evenly across the day. Data centers run at basically constant loads 24/7. There aren’t really peaks or low points. In comparison, the Trinity Test released its heat in less than a second and then it all dissipated. The Trinity Test squeezed a full day of a gigawatt data center’s energy into about a second. Since a day has 86,400 seconds, the bomb was at least 86,000 times as concentrated as the data center. Here’s 86,000 dots:

If each of these dots is a second of a gigawatt data center’s heat exhaust throughout the day, all of them together at once is the heat released in the one second the Trinity Test actually happened.
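The concentration factor is just the ratio of the two release times. A small sketch, assuming the bomb's release lasts a full second (it was actually shorter, which is why the figure is a lower bound); the helper name is hypothetical:

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def average_power_gw(energy_gwh: float, duration_s: float) -> float:
    """Average power in GW when energy_gwh is released over duration_s seconds."""
    return energy_gwh * 3600.0 / duration_s  # GWh -> GW*s, then divide by seconds


energy_gwh = 24.0  # one day of a 1 GW data center

data_center_gw = average_power_gw(energy_gwh, SECONDS_PER_DAY)  # spread over a day
bomb_gw = average_power_gw(energy_gwh, 1.0)                     # assumed 1 second

concentration = bomb_gw / data_center_gw
print(f"The same energy in one second is {concentration:,.0f}x the power")
```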

I hope this makes it a little more clear why comparing gigawatt data centers to nuclear bombs is a little goofy.

Because data center exhaust is so flat and consistent, it is basically the very least concentrated way of releasing a given amount of heat energy over time, and a nuclear bomb is the very most concentrated way of releasing the same amount of heat.

In comparison, a magnifying glass concentrates heat about 10,000 times. The physical area the light hits is around 1/10,000th of the original area it would’ve been. The relationship between sunlight concentrated by a magnifying glass and what it would normally do is similar to the relationship between heat concentrated by the Trinity Test and the long slow release of a data center.

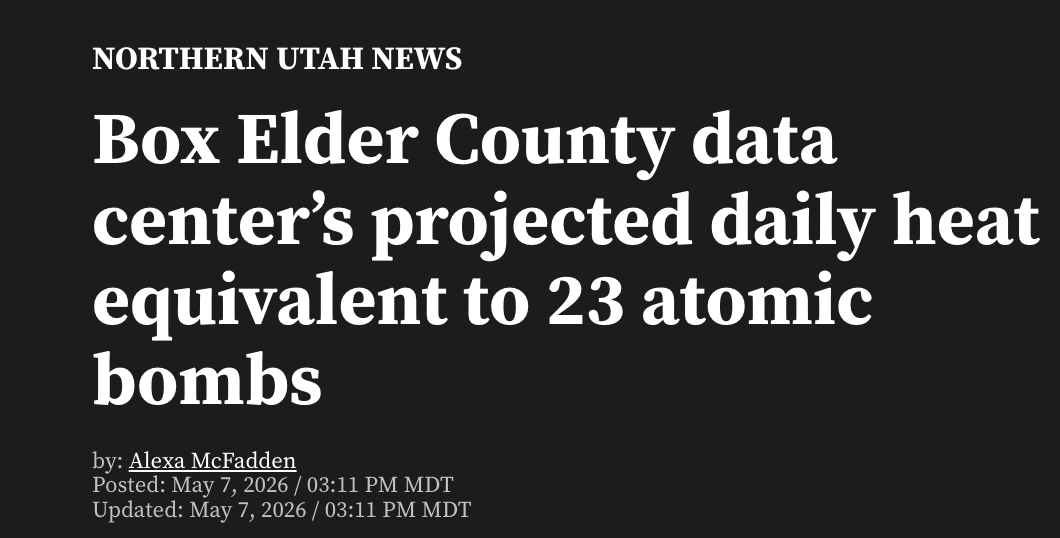

I’ve recently seen news stories reporting heat exhaust in units of nuclear bombs, and I expect this to become more common as more gigawatt data centers are proposed.

This unit gives readers basically as much information as reporting how much sunlight will hit you in a day in units of hyper-magnified beams of burning rays. Not only is this the most alarming comparison possible, pitting the most hyper-concentrated release of heat possible against the absolute least concentrated release of the same heat, it’s also bad because nuclear bombs vary enormously in size: some release tens of thousands of times as much heat energy as others, and the bomb they’re using for comparison is among the smallest ever made.

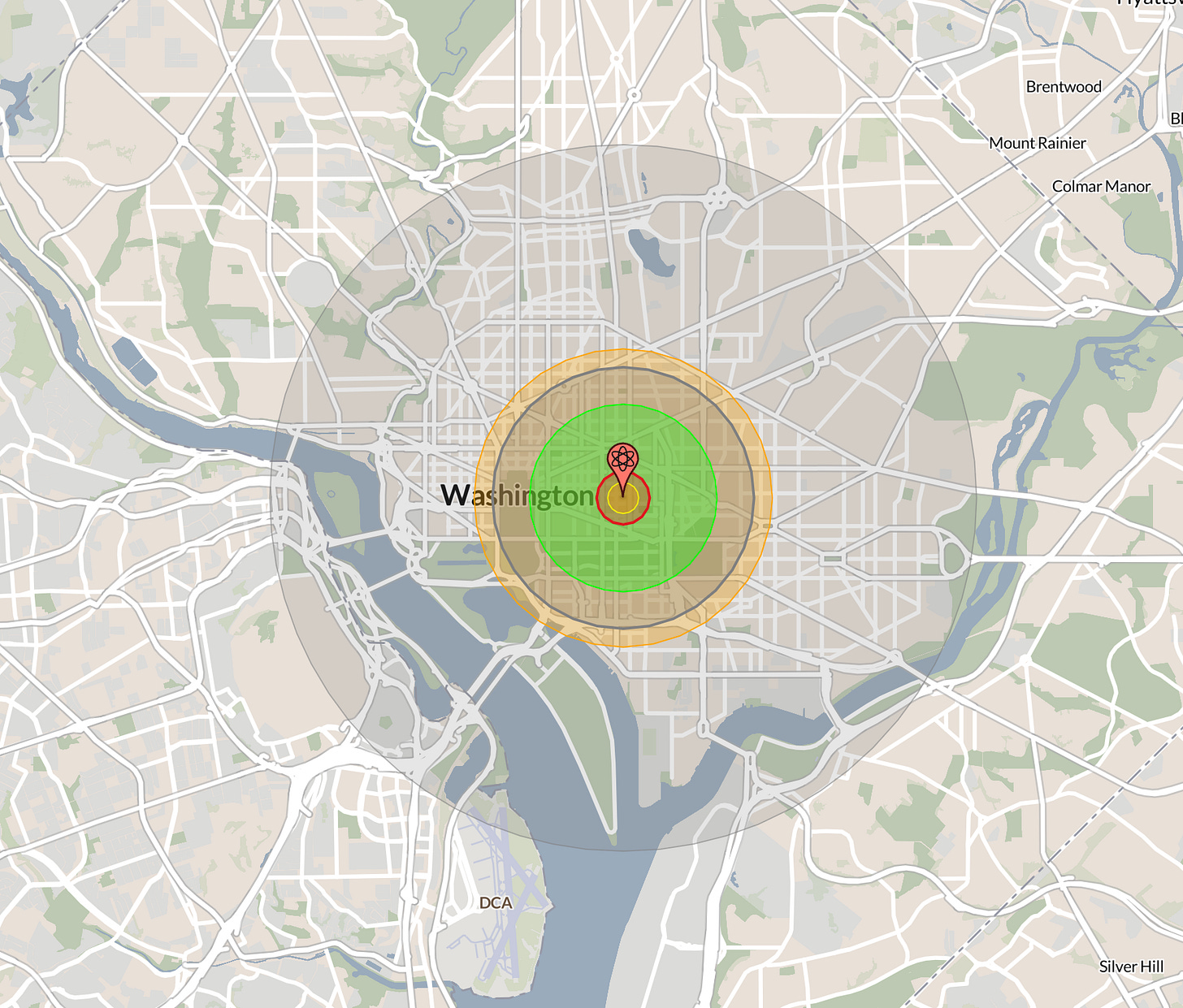

In the stories I’ve seen, the authors assume this data center will add a 16 GW total thermal load: 9 GW for the data center itself and an additional 7 GW expelled as waste heat by the gas turbines it uses to generate its electricity. This is a reasonable estimate for the very largest this data center could ever be. 16 GW x 24 hours = 384 GWh, and dividing by the 23 atom bombs per day the stories cite gives 16.7 GWh per bomb. A GWh is about 0.86 kilotons of TNT, so each bomb is about 14.4 kilotons of TNT. This is about the same as the Little Boy bomb dropped on Hiroshima. While horrible, this is also one of the smallest nuclear weapons ever developed. Using one of my all time favorite data viz tools, NukeMap, here’s what it would look like dropped on where I live:

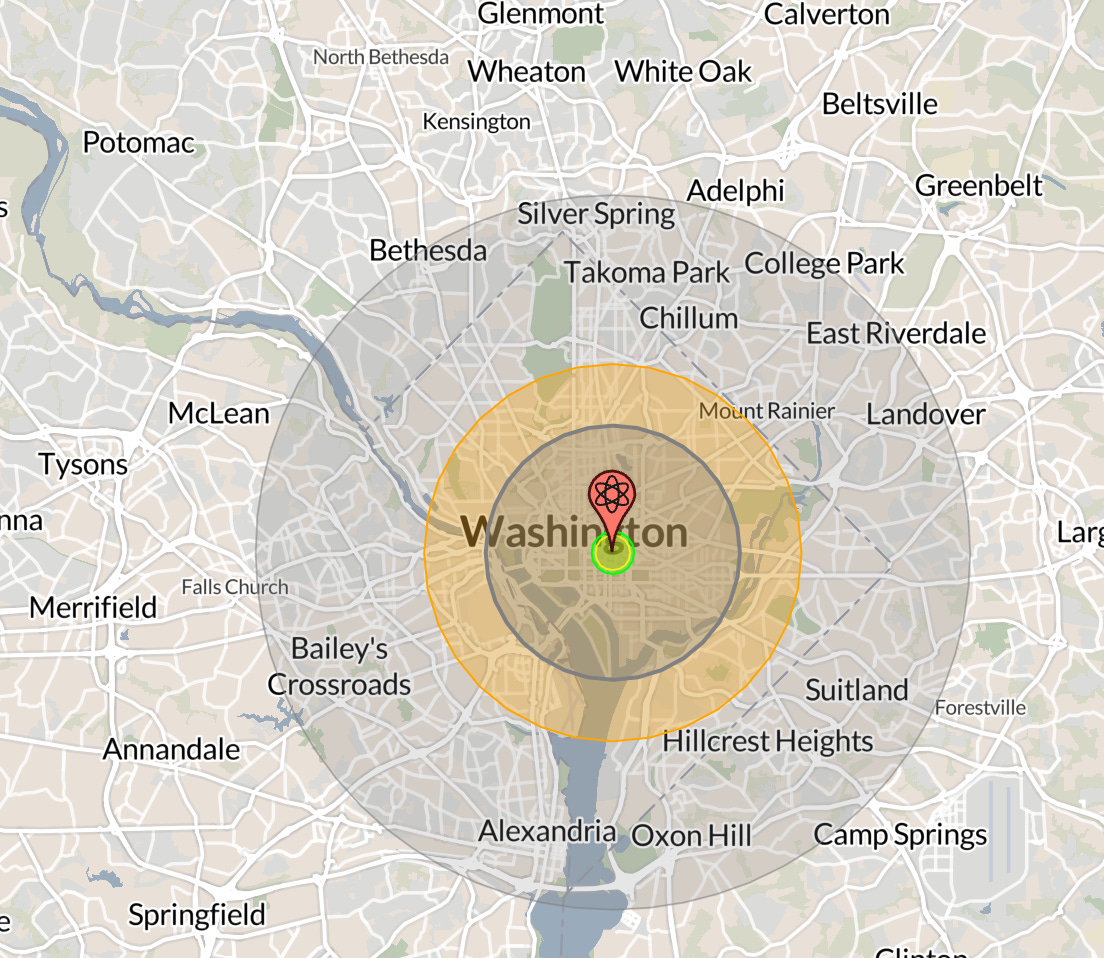

Most nuclear bombs are way, way, way larger than this. Here’s what the average bomb in the US nuclear arsenal looks like in comparison:

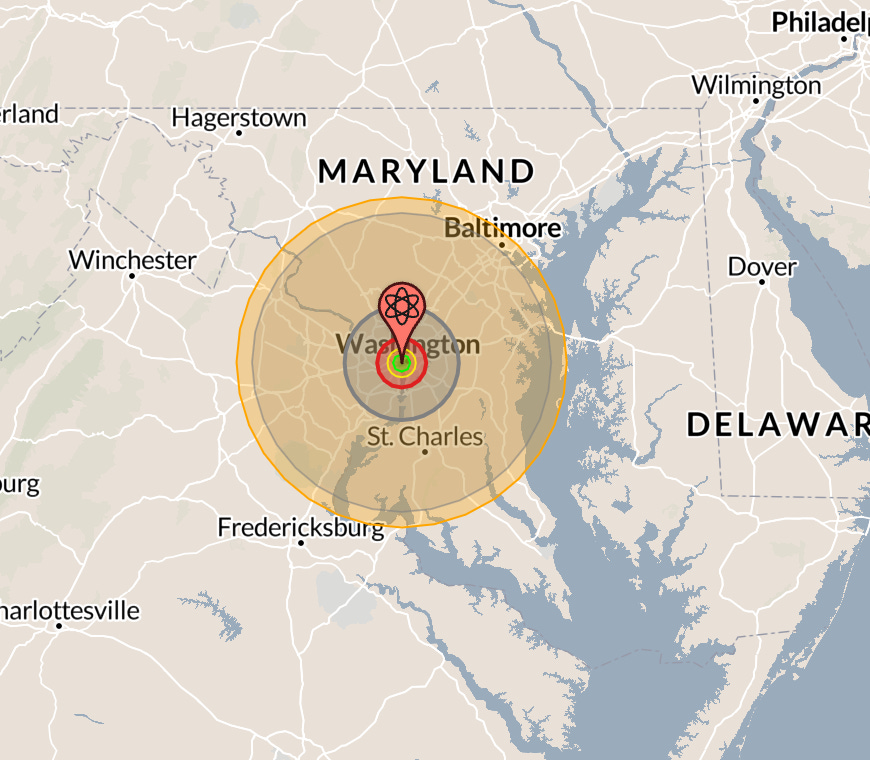

And here’s the largest nuclear bomb ever tested:

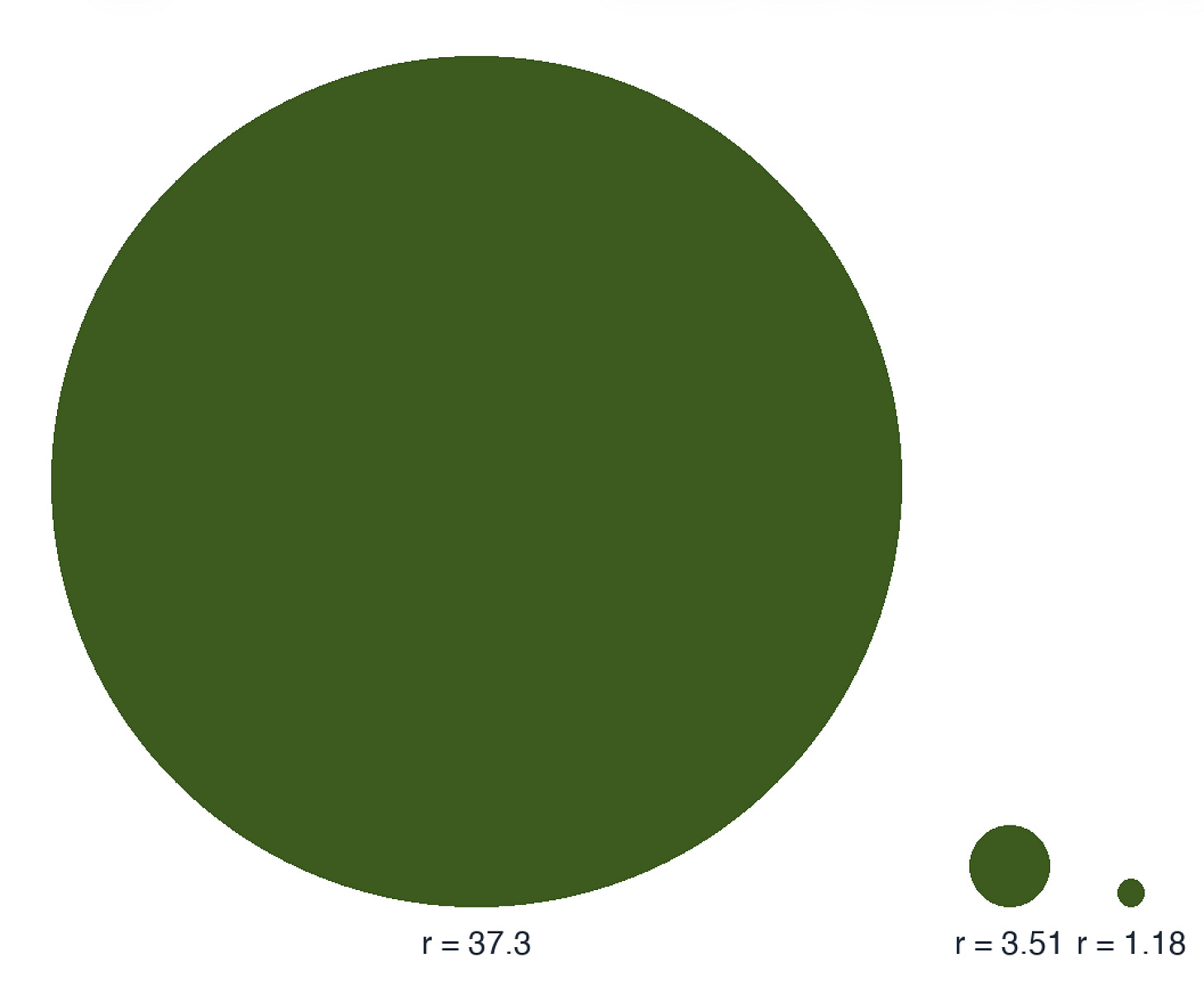

Here’s the thermal radiation radius of each compared to each other:

The largest bomb ever tested was ~50,000 kilotons, which is 150 times as much heat energy released in a second as this largest ever data center will emit in a day. I could use this number and say that the Utah data center will emit the heat of just 1/150th of a single nuclear bomb, spread over the course of a whole day. That would be equally correct, but I think also deceptive. We need a better way of understanding how this heat energy compares to other normal things.
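The bomb arithmetic in the last few paragraphs can be reproduced in a few lines. This is a rough sketch using only the figures quoted above (16 GW, 23 bombs per day, ~50,000 kilotons) plus the standard definition of a kiloton of TNT; the variable names are mine.

```python
# 1 kiloton of TNT = 4.184e12 J and 1 GWh = 3.6e12 J, so about 1.162 GWh per kiloton.
GWH_PER_KILOTON = 4.184e12 / 3.6e12

thermal_load_gw = 9.0 + 7.0               # data center plus gas turbine waste heat
daily_heat_gwh = thermal_load_gw * 24.0   # 384 GWh per day

bombs_per_day = 23                        # the count used in the news stories
gwh_per_bomb = daily_heat_gwh / bombs_per_day
kt_per_bomb = gwh_per_bomb / GWH_PER_KILOTON

daily_heat_kt = daily_heat_gwh / GWH_PER_KILOTON
largest_test_kt = 50_000.0                # largest bomb ever tested, as quoted above
ratio = largest_test_kt / daily_heat_kt

print(f"Implied bomb size: {gwh_per_bomb:.1f} GWh = {kt_per_bomb:.1f} kt")
print(f"A full day of exhaust: {daily_heat_kt:.0f} kt")
print(f"Largest test vs one day of exhaust: about {ratio:.0f}x")
```

The same 384 GWh can be reported as 23 bombs or as 1/150th of a bomb depending on which bomb you pick, which is the whole problem with the unit.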

As a former physics teacher, it’s been a mild form of torture hearing people start to say in an alarmed way that all energy data centers use gets turned into heat, as if that’s crazy or wasteful. This is true for everything we do.

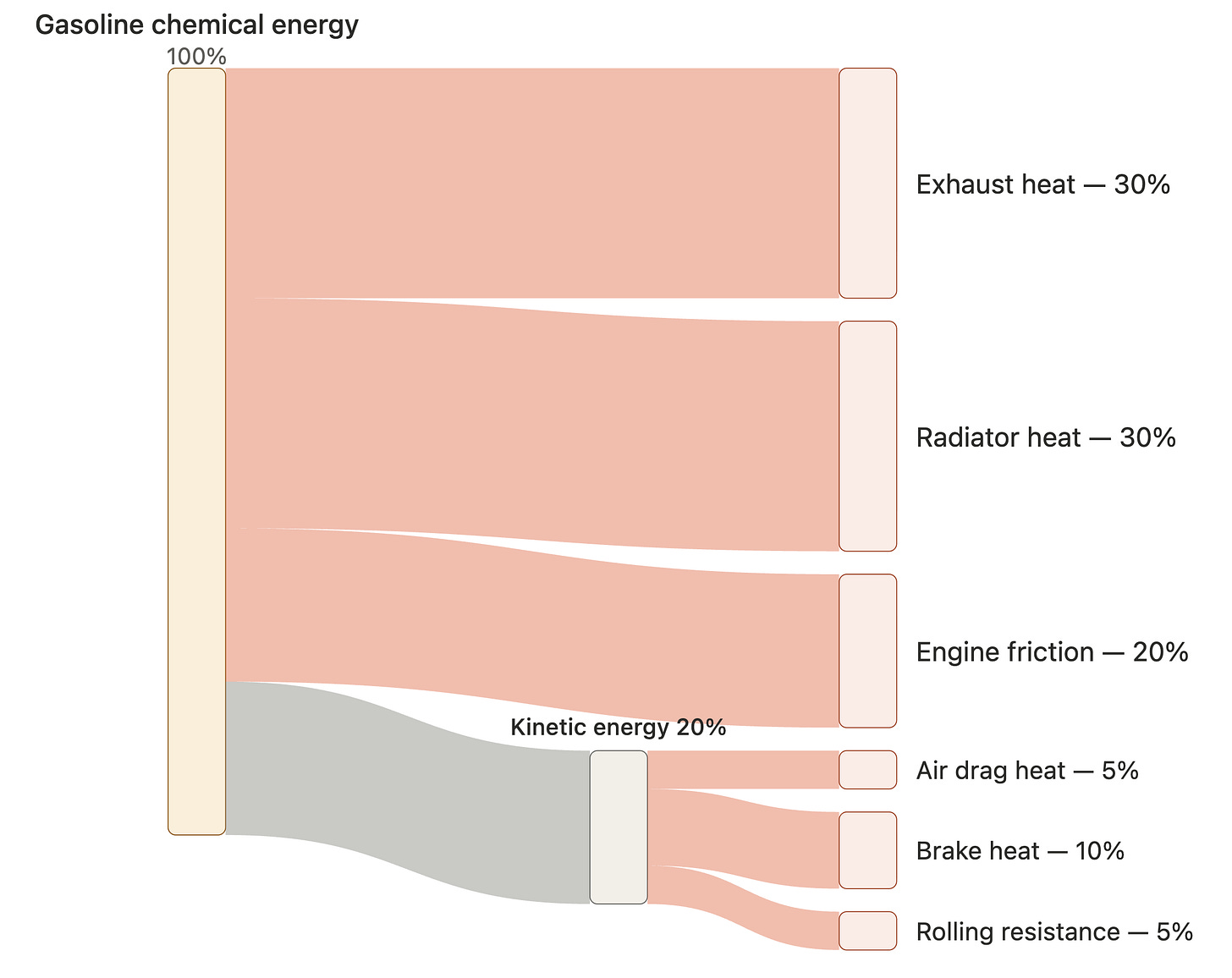

All energy we use is ultimately dissipated as heat in the atmosphere. The gas you fill your car with is full of stored chemical energy. When you drive, about 80% of that chemical energy is converted to heat energy in your engine, and either sent out through your exhaust pipe or radiated away from your engine block and radiator. The other 20% is converted to kinetic energy in your car to make it move (and a tiny amount of that is itself converted to electricity to later be light and sound energy from your car’s display and speakers, or to start your car). When your car impacts air particles, the friction between your car and the air converts some of the kinetic energy from the gas to heat on your car and the air. This isn’t obvious at the speeds we drive, but a space ship returning to Earth through the atmosphere quickly heats up enough to make the friction heating effects of air really obvious. Whenever your car slows as you drive without applying the gas, what’s actually happening is that the kinetic energy is being taken from the car and turned into thermal energy on the car’s surface and the surrounding air. Applying the brakes converts a large amount of kinetic energy quickly into thermal energy on the ground and in your tires. By the time you stop your car, all the energy that was originally stored in the gas has been dissipated as heat, either in the initial explosion of the gas, the friction between your car and the air, or the friction between your wheels and the ground that you use to stop.

Here’s a rough Sankey diagram of how energy moves through your car to eventually become heat:

This means that driving your car adds exactly as much heat energy to the environment you drive it in as slowly burning the gas without a car over the time it takes you to use the full amount.

Whenever you see energy you’re using disappear, it’s almost always becoming heat. The lamps in your home constantly beam out light energy when on, but the room doesn’t get brighter and brighter over time. The light energy doesn’t build up, which means it must be going somewhere else. What's happening is that the light is being absorbed by the physical surfaces in your home. When a photon is absorbed, its energy is transferred into the random jiggling of molecules in what it hits, and that random jiggling is heat energy! So all energy that leaves your lights also becomes heat energy in the space around it. The conversion is from electric energy to light energy to heat energy. Visible light just carries so little energy that we don’t notice.

All energy we use, whether it’s the gas in our cars or the things we do with the electricity we buy, ultimately gets converted to heat energy in our environment. I live in Washington DC, which uses about 28 gigawatt-hours of electricity every day and roughly 37 gigawatt-hours equivalent of primary energy every day in our cars and elsewhere, for a total of approximately 65 gigawatt-hours of energy every day. All of that is being turned into heat in the area, so human energy usage in DC is equivalent to “dropping about 3.7 nuclear bombs on the city every day.”

Does that tell us much? The sun delivers about 750 GWh of heat to DC’s surfaces daily, 43 nukes’ worth and 12x all human activity. About 85% of this leaves the ground and heats the air. About 23% of incoming sunlight is absorbed by air before hitting the ground, adding another 370 GWh dumped into the atmosphere above the city. In total, the sun will deliver about 1000 GWh/day, or 57 nukes.
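Here is a hedged sketch of that solar budget in bomb units. The one number not taken from the text is the bomb size: I am assuming a 15 kiloton yield for the Hiroshima-sized bomb, because that value reproduces the bomb counts quoted here. Treat it as an assumption, not a sourced figure.

```python
# Assumption: the "bomb" unit is a 15 kt weapon. 1 kt of TNT = 4.184e12 J, 1 GWh = 3.6e12 J.
ASSUMED_BOMB_KT = 15.0
GWH_PER_BOMB = ASSUMED_BOMB_KT * 4.184e12 / 3.6e12  # about 17.4 GWh

human_gwh = 28.0 + 37.0            # DC electricity plus other energy use, per day
surface_solar_gwh = 750.0          # sunlight reaching DC's surfaces, per day
surface_to_air_fraction = 0.85     # share of surface heat that ends up in the air
absorbed_by_air_gwh = 370.0        # sunlight absorbed by air before reaching the ground

# Heat that ends up in the air over the city: roughly 1000 GWh per day.
total_to_air_gwh = surface_solar_gwh * surface_to_air_fraction + absorbed_by_air_gwh

human_bombs = human_gwh / GWH_PER_BOMB
surface_bombs = surface_solar_gwh / GWH_PER_BOMB
total_bombs = total_to_air_gwh / GWH_PER_BOMB
sun_vs_human = surface_solar_gwh / human_gwh

print(f"Human energy use: {human_bombs:.1f} bombs/day")
print(f"Sunlight on surfaces: {surface_bombs:.0f} bombs/day ({sun_vs_human:.0f}x human use)")
print(f"Solar heat into the air: {total_to_air_gwh:.0f} GWh, about {total_bombs:.0f} bombs/day")
```

Small differences from the figures in the text come from rounding the total to 1000 GWh.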

The water cycle moves enormous amounts of heat too. When water vapor condenses into cloud droplets or rain, it releases the latent heat that was absorbed when the water evaporated. Over DC’s land area, condensing just 1 millimeter of water releases about 108 GWh of heat, or about 6 nuclear bombs. One inch of rain over DC corresponds to about 2,740 GWh of latent heat release, or about 157 nuclear bombs. If I told you that an oncoming rain storm was going to unleash the heat of 157 nuclear bombs, how worried would you be? Would I be representing the situation well? I’d be technically correct, at least.
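The rain numbers follow from the latent heat of water. This sketch uses two values that are not stated above and are my assumptions: a DC land area of about 158 square kilometers and a latent heat of about 2.45 MJ per kilogram (a typical value near room temperature). With those inputs the results land close to the figures in the text.

```python
# Assumptions (not stated in the article):
DC_LAND_AREA_M2 = 158e6            # roughly 158 km^2 of land area
LATENT_HEAT_J_PER_KG = 2.45e6      # latent heat of vaporization near room temperature
WATER_DENSITY_KG_PER_M3 = 1000.0
JOULES_PER_GWH = 3.6e12
MM_PER_INCH = 25.4


def condensation_heat_gwh(depth_mm: float) -> float:
    """Latent heat released when depth_mm of water condenses over the whole area."""
    volume_m3 = DC_LAND_AREA_M2 * depth_mm / 1000.0
    mass_kg = volume_m3 * WATER_DENSITY_KG_PER_M3
    return mass_kg * LATENT_HEAT_J_PER_KG / JOULES_PER_GWH


heat_per_mm_gwh = condensation_heat_gwh(1.0)
heat_per_inch_gwh = condensation_heat_gwh(MM_PER_INCH)

print(f"1 mm of rain: about {heat_per_mm_gwh:.0f} GWh")
print(f"1 inch of rain: about {heat_per_inch_gwh:.0f} GWh")
```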

So now if I tell you that solar energy delivers 57 nuclear bombs to DC every day, human energy usage adds about 3.7 nuclear bombs, and an inch of rain can add about 157, what does this tell you about life in DC?

I’ve given you no way of understanding how bad this actually is for the DC environment. This is because reporting raw huge amounts of energy like this, with meaningless alarming comparisons like nuclear bombs, gives you zero real footing in the issue at all. Whenever you read something about data centers, always ask “Is the person writing this actually trying to give me real footing in the specific problems this will cause, or just throwing out alarming huge numbers without giving me the context to understand what they mean?” If it’s the second one, maybe just stop reading.

Here’s a comparison that makes more sense to me: this data center is like building a bustling city in the Utah desert. The property it’s on will be about the size of DC, eventually with lots of gas power plants and data centers. The heat emitted by all of these together will be about 6 times the heat emitted by the cars, businesses, and households in DC. It’s like they’re building a city 6 times as dense as DC in the middle of nowhere.

How bad will this be for the environment? There’s a lot to say about air pollution and climate effects, but what about the effects of the heat exhaust? Well, what are the actual effects of the heat dissipated by the energy used by DC businesses and cars and homes? Most of the extra warmth in cities compared to rural areas comes from the urban heat island effect, which has two main causes: surface changes (asphalt, dark roofs, and lost vegetation absorbing sunlight that would otherwise be reflected or go into evaporating water) and direct waste heat from human energy use.

In most US cities, the surface effect dominates. A recent estimate puts the local air temperature increase at roughly 0.025 °C per W/m² of human energy use. This varies with city size, wind, and how high the heat can rise.

Using DC’s energy consumption and size, this implies DC’s human energy usage adds about 0.4 °C to the city’s temperature. Naively carrying this forward to the largest data center ever proposed, which could eventually draw 9 GW of electricity (about 2% of the electricity draw of the entire country!) and dissipate 16 GW of heat, this implies it might raise the very local temperature on its property by 2.4 °C, or about 4.3 degrees Fahrenheit. This is not nothing! It’s a pretty significant change. But when someone tells you “This thing is like dropping 23 nuclear bombs per day” do you immediately say “Oh yeah, like an extra 4 degrees!”? If not, the unit is bad.
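A naive version of this estimate can be sketched as follows. It takes the 0.025 °C per W/m² sensitivity and the energy figures from the text, and assumes an area of about 158 square kilometers for both DC and the data center property (the text only says the property is "about the size of DC"). The outputs come out in the same ballpark as the 0.4 °C and 2.4 °C above; small differences come from rounding and the assumed area.

```python
SENSITIVITY_C_PER_W_M2 = 0.025   # local warming per W/m^2 of waste heat, from the text
ASSUMED_AREA_M2 = 158e6          # assumption: ~158 km^2 for DC and for the property


def local_warming_c(average_power_w: float, area_m2: float) -> float:
    """Naive local temperature rise from waste heat spread evenly over an area."""
    heat_flux_w_per_m2 = average_power_w / area_m2
    return SENSITIVITY_C_PER_W_M2 * heat_flux_w_per_m2


dc_average_power_w = 65e9 / 24.0   # 65 GWh per day expressed as average watts
data_center_power_w = 16e9         # 16 GW of heat, running around the clock

dc_warming_c = local_warming_c(dc_average_power_w, ASSUMED_AREA_M2)
site_warming_c = local_warming_c(data_center_power_w, ASSUMED_AREA_M2)
site_warming_f = site_warming_c * 1.8   # temperature differences scale by 9/5

print(f"DC: about {dc_warming_c:.1f} C")
print(f"Data center property: about {site_warming_c:.1f} C ({site_warming_f:.1f} F)")
```

This is a linear extrapolation of a city-scale estimate, so it should be read as an order-of-magnitude check rather than a forecast.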

2.4 degrees Celsius is more than the Paris Agreement goal of keeping the rise in global temperatures below 2 degrees Celsius (related to global warming and carbon emissions). From what I understand from climate scientists, 2 degrees would already be pretty bad, so naively 2.4 degrees without more context sounds really bad, but I always find it hard to get a grasp of what these numbers would actually mean for my life.

Of course I wouldn't mind it just being 2 degrees hotter outside for my own experience of the weather, but I have to assume this also impacts things like rainfall, plant growth and the local ecosystem. Do you have a sense of how big these effects would actually be? You were talking about 2.4 degrees on its property, maybe local enough that there are no climate impacts, and 0.4 degrees for the whole city. Is that the number we should care about?

Nitpick: re-entry heating for spacecraft is mostly compression not friction